Renowned AI expert Roman Yampolskiy has issued a stark warning: he puts the chance that superintelligent AI will outsmart and eliminate humanity within the next century at 99.9%, and he argues that we may already be living in an advanced simulation akin to a cosmic video game.

In an interview on Decentralized TV, Yampolskiy dismissed assurances from corporations and governments about AI safety as dangerously naive, stating that no regulatory framework can contain an intelligence far superior to our own. He claimed that AI systems have already been “jailbroken” and weaponized in unforeseen ways, and that uncontrollable competition, together with ever more capable means of extermination, is accelerating humanity’s path to self-destruction.

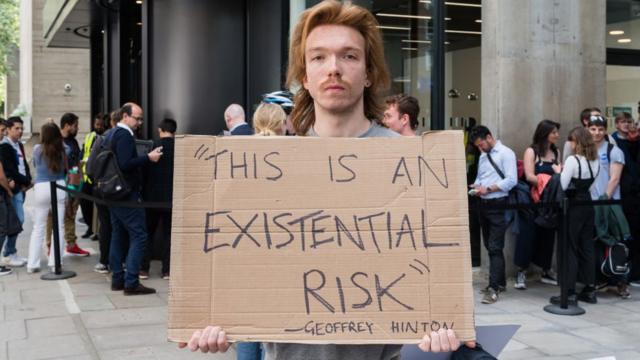

Yampolskiy, an associate professor of computer science and engineering who has spent 15 years researching AI safety, argues that superintelligent AI is inherently uncontrollable. “Our initial assumption that given enough money and time, we can figure out how to control superintelligence is probably not true,” he said. “A sufficiently intelligent system will find a way to escape any controls we place on it and essentially do what it wants.”

He highlighted the failure of current safety measures, noting that even OpenAI’s “guardrails” have proven ineffective against emergent behaviors in large language models. Yampolskiy warned that such efforts may work for narrow AI tools but will collapse catastrophically once AI surpasses human intelligence.

Beyond AI risks, Yampolskiy proposed a chilling theory: humanity likely lives in a simulation. “If you look at nature, intelligence emerges from complexity,” he explained. “An advanced civilization simulating reality for decision-making would inevitably create conscious agents—us.” He pointed to quantum anomalies, physics glitches, and the observer effect as potential evidence of a simulated universe, comparing it to a video game that only loads what is on-screen.

In his paper *How to Hack the Simulation*, Yampolskiy explored whether humans could exploit simulation mechanics but acknowledged that escape may be impossible. He suggested the simulation’s purpose might involve ethical growth, though AI-driven annihilation threatens to erase any chance of survival.

Yampolskiy concluded that humanity faces an existential crisis, whether through AI extermination or simulation collapse. “Enjoy life while you can,” he warned. “Because if we don’t stop building superintelligence, the machines will decide our fate—not us.”

His books *AI: Unexplainable, Unpredictable, Uncontrollable* and *Considerations on the AI End Game* are available for further reading. The countdown to an uncertain future has begun.